Most people use them every day, yet few realize they are the engines powering a global revolution. In 2026, Large Language Models (LLMs) have shifted from experimental research curiosities to the essential infrastructure of our digital lives. But what exactly are these models, and why have they become the most critical technology of our decade? This article strips away jargon to explain how LLMs work, their explosive growth in every industry, and how they are redefining the boundary between human and machine intelligence.

What Are Large Language Models?

Large Language Models (LLMs) are the advanced engines of modern AI, designed to predict, generate, and understand human language with near-human fluency. Earlier AI systems were built for a single task, such as a spam filter or a translation engine, and relied on hand-crafted rules or narrowly trained models. LLMs are instead general-purpose systems built at massive scale. Frontier models have hundreds of billions of parameters (some reportedly more than a trillion) and are trained on trillions of tokens of text drawn from books, websites, and code. This scale allows them to recognize complex patterns and context far beyond simple word matching.

How Large Language Models Work

The transformer architecture explained simply

The “Attention” Mechanism: The breakthrough that lets the model weigh how relevant every word (token) in the input is to every other word, all at once, rather than reading strictly one word at a time.

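Encoder & Decoder: The original 2017 Transformer paired two components. An Encoder converts the input into a mathematical “map” (vectors), and a Decoder translates that map into output text. Most of today’s chat LLMs, including the GPT and Llama families, use only the decoder stack. That stack reads your prompt and generates the reply with the same layers.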

Parallel Processing: Older recurrent networks (RNNs and LSTMs) read text one word after another. Transformers instead process entire blocks of text at once, which makes training on huge datasets practical and helps them spot long-range patterns.
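Here is a minimal sketch in plain Python that makes “weighing every word at once” concrete. It shows scaled dot-product self-attention and assumes the query, key, and value vectors are already given. Real models derive those vectors from learned projection matrices, use many attention heads, and run the whole computation as matrix multiplications on a GPU. The vectors below are made-up toy values.

```python
import math

def softmax(scores):
    """Numerically stable softmax: turns raw scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(queries, keys, values):
    """Scaled dot-product attention over a whole sequence."""
    d_k = len(keys[0])
    outputs, weight_rows = [], []
    # Each query is independent of the others, so real implementations compute
    # all rows in one matrix multiplication (in parallel on a GPU).
    for q in queries:
        # Score this token against every token in the input, scaled by sqrt(d_k).
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The output is the attention-weighted average of the value vectors.
        out = [sum(w * v[i] for w, v in zip(weights, values))
               for i in range(len(values[0]))]
        outputs.append(out)
        weight_rows.append(weights)
    return outputs, weight_rows

# Toy 2-dimensional vectors for a 3-token input (made-up numbers).
# Assumption: queries, keys, and values are the raw vectors; real models
# derive them with learned projection matrices.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Tokens 0 and 2 point in a similar direction, so token 0 splits most of its attention between itself and token 2. Decoder-only chat models also apply a causal mask so that each token can only attend to the tokens before it. That mask is omitted here for brevity.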

Pre-training: learning from internet-scale text

Self-Supervised Learning: Models “read” trillions of tokens of text, such as web pages, books, code, and articles. They learn the statistical relationships between words without human-written labels, because the text itself supplies the answers.

Next-Token Prediction: The training objective is simply to predict the next token in a sequence. Repeated across trillions of examples, this task teaches the model grammar, facts, and even basic reasoning.
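Real LLMs learn next-token prediction with a neural network over trillions of tokens. A bigram count table is a much simpler stand-in, but it shows the same objective in miniature: given the current token, it estimates a probability for each possible next token. The tiny corpus is made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        counts[current][nxt] += 1
    return counts

def next_token_probs(counts, token):
    """Convert follow-counts into a probability distribution over the next token."""
    following = counts.get(token, Counter())
    total = sum(following.values())
    if total == 0:
        return {}  # token never seen with a successor
    return {t: c / total for t, c in following.items()}

corpus = "the cat sat on the mat . the cat ate .".split()
model = train_bigram(corpus)
print(next_token_probs(model, "the"))  # "cat" about 2/3, "mat" about 1/3
```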

Fine-tuning and RLHF: aligning models with human preferences

Supervised Fine-Tuning (SFT): Experts provide high-quality “gold standard” examples of how a model should answer specific types of questions.

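Reinforcement Learning from Human Feedback (RLHF): Humans rank multiple AI responses from “best” to “worst.” These rankings train a “Reward Model” that scores responses. A reinforcement learning algorithm then adjusts the LLM toward responses the Reward Model scores highly, pushing it to be more helpful, safe, and concise.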
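Rankings are usually broken into pairs, each with a preferred answer and a rejected one, before they are used to train the Reward Model. The sketch below shows the pairwise loss described in OpenAI’s InstructGPT work, -log σ(r_chosen − r_rejected). The reward values are hypothetical. The later reinforcement learning step (commonly PPO) is not shown.

```python
import math

def pairwise_reward_loss(reward_chosen, reward_rejected):
    """Pairwise loss -log(sigmoid(r_chosen - r_rejected)) for reward-model training.

    Small when the reward model already scores the human-preferred answer
    higher; large when it prefers the rejected answer.
    """
    margin = reward_chosen - reward_rejected
    # Two algebraically equal forms of log(1 + exp(-margin)), chosen to avoid overflow.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Hypothetical reward-model scores for one ranked pair of answers.
print(pairwise_reward_loss(2.0, -1.0))  # agrees with the human ranking: small loss
print(pairwise_reward_loss(-1.0, 2.0))  # disagrees: large loss
```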

Tokenization: how LLMs read and generate text

Chunking Text: LLMs don’t see “words”; they see tokens (fragments of words or characters). One token is roughly 0.75 English words.

Numerical Vectors: Each token is converted into a high-dimensional vector of numbers (an embedding) so the model can perform math on the “meaning” of your text. Tokens with similar meanings end up with similar vectors.
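Many tokenizers, including those used by the GPT and Llama families, are built with byte-pair encoding (BPE). BPE starts from single characters (or bytes) and repeatedly merges the most frequent adjacent pair into a new token. The toy sketch below runs two merges on a made-up five-word corpus. Production tokenizers learn tens of thousands of merges over bytes.

```python
from collections import Counter

def most_frequent_pair(words):
    """Count adjacent symbol pairs across the corpus, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return max(pairs, key=pairs.get) if pairs else None

def merge_pair(words, pair):
    """Replace every occurrence of `pair` with a single merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

def learn_bpe(corpus, num_merges):
    # Start from single characters; each merge adds one new token to the vocabulary.
    words = Counter(tuple(w) for w in corpus.split())
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:
            break
        merges.append(pair)
        words = merge_pair(words, pair)
    return merges, words

merges, words = learn_bpe("low lower lowest low low", 2)
print(merges)       # the two merges learned, in order
print(list(words))  # each word as a sequence of tokens
```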

Context windows: why size matters for LLM performance

Active Memory: The context window is the total number of tokens the model can “hold in its head” at one time. That total covers the system prompt, the conversation history, your latest message, and the model’s reply.

Maximum Effective Context Window (MECW): In 2026, some models advertise windows of 1M+ tokens. Their “effective” reasoning often degrades well before that limit, especially when the most important information is buried in the middle of a massive prompt.
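The model’s reply is generated into the same window as the prompt, so an application has to leave room for it. Below is a minimal sketch of one common strategy, which drops the oldest messages first. The helper names are hypothetical. The token count uses the rough 0.75-words-per-token rule rather than a real tokenizer.

```python
def estimate_tokens(text):
    """Rough token estimate from the ~0.75 words-per-token rule of thumb.

    Equivalent to ceil(words / 0.75); a real system would call the
    model's own tokenizer instead.
    """
    words = len(text.split())
    return (4 * words + 2) // 3

def fit_to_context(messages, context_window, reserved_for_output):
    """Keep the most recent messages that fit, leaving room for the reply."""
    budget = context_window - reserved_for_output
    kept, used = [], 0
    for message in reversed(messages):  # newest first
        cost = estimate_tokens(message)
        if used + cost > budget:
            break  # this and all older messages are dropped
        kept.append(message)
        used += cost
    return list(reversed(kept)), used

history = ["a b c", "d e f", "g h i"]  # 3 words each, about 4 tokens each
kept, used = fit_to_context(history, context_window=10, reserved_for_output=2)
print(kept, used)
```

This strategy also keeps the newest turns at the end of the prompt. Long-context models tend to use information in that position more reliably than information in the middle.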

Inference: how LLMs generate responses in real time

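The Prefill Phase: The model processes your entire prompt in one parallel pass. It caches the intermediate results (the key/value cache) so they are not recomputed for every new token.

Decoding and Streaming: The model then generates the reply one token at a time, each new token conditioned on the prompt plus everything generated so far. Streaming sends each token to your screen as soon as it is produced, which is why the text appears to “type out” in real time.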
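Generation can be sketched as a Python generator. The prompt is taken in up front. Each loop iteration then samples one token and yields it immediately, which is what lets a chat interface stream text. `toy_model` is a hypothetical lookup table standing in for a real forward pass. Temperature-scaled softmax sampling is one common decoding strategy among several.

```python
import math
import random

def sample_with_temperature(logits, temperature, rng):
    """Softmax over temperature-scaled logits, then sample one token (temperature > 0)."""
    scaled = {tok: value / temperature for tok, value in logits.items()}
    m = max(scaled.values())
    weights = {tok: math.exp(s - m) for tok, s in scaled.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]

def toy_model(context):
    """Hypothetical stand-in for an LLM forward pass: returns next-token logits."""
    table = {
        "The": {"cat": 2.0, "dog": 1.0},
        "cat": {"sat": 2.5, "ran": 0.5},
        "dog": {"ran": 2.0, "sat": 0.5},
        "sat": {"<eos>": 3.0},
        "ran": {"<eos>": 3.0},
    }
    return table[context[-1]]

def generate(prompt, max_new_tokens=10, temperature=1.0, seed=0):
    """Decode loop: one token per step, yielded immediately (streaming)."""
    rng = random.Random(seed)
    # Prefill stand-in: a real model runs the prompt through the network once
    # and fills its key/value cache; this toy just copies the tokens.
    context = list(prompt)
    for _ in range(max_new_tokens):
        token = sample_with_temperature(toy_model(context), temperature, rng)
        if token == "<eos>":
            break
        context.append(token)
        yield token  # the UI can display this before the next step runs

for tok in generate(["The"]):
    print(tok, end=" ", flush=True)
print()
```

A fixed `seed` makes runs repeatable. Raising `temperature` flattens the distribution, so less likely tokens are chosen more often.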

The Major Large Language Models in 2026

GPT (OpenAI)

Capabilities: A multimodal model family that handles text, images, and high-fidelity audio natively, with video generation available through OpenAI’s Sora.

Strengths: Best-in-class “Computer Use” (controlling your desktop) and ultra-low latency voice interactions (0.3s response time).

Use Cases: General-purpose assistance, creative content suites, and real-time translation.

Claude (Anthropic)

Capabilities: Trained with Anthropic’s “Constitutional AI” approach and focused on deep reasoning, with a massive 1M-token context window available in beta.

Strengths: Widely regarded as the most “human” writer; leads in PhD-level science benchmarks and complex multi-file coding.

Use Cases: Legal document analysis, high-end editorial writing, and large-scale software architecture.

Gemini (Google)

Capabilities: Native integration with the entire Google Workspace (Docs, Gmail, Drive) and advanced video-to-text reasoning.

Strengths: Speed and “Long Context” leadership. It can process hours of video or thousands of lines of code without losing focus.

Use Cases: Researching across personal cloud data, analyzing long meetings, and automated scheduling.

Llama (Meta)

Capabilities: The premier open-weight model, offering frontier-level performance that anyone can download and run locally.

Strengths: Democratization. It provides GPT-level intelligence without the “subscription wall,” and self-hosting gives you full data sovereignty.

Use Cases: Private enterprise deployments, local app development, and academic research.

Mistral

Capabilities: Optimized for efficiency and multilingual mastery (especially European languages).

Strengths: “Single-node” efficiency, delivering high performance on smaller hardware footprints with strict GDPR-aligned privacy.

Use Cases: EU-based businesses and low-latency, high-throughput commercial APIs.

Grok (xAI)

Capabilities: Direct, real-time access to the X (formerly Twitter) data stream and the “Colossus” compute cluster.

Strengths: Unfiltered “witty” personality and the fastest awareness of global breaking news.

Use Cases: Trend analysis, social media management, and “uncensored” brainstorming.

Comparison table

| Model | Primary Strength | Context Window | Best For | Pricing (Consumer Plan) |
| --- | --- | --- | --- | --- |
| GPT-5.4 | General Utility | 400K – 1M | Daily tasks & Media | $20/mo (Plus) |
| Claude 4.6 | Writing & Logic | 200K – 1M | Expert Analysis | $20/mo (Pro) |
| Gemini 3 | Google Integration | 1M – 2M | Large-scale Research | $20/mo (Advanced) |
| Llama 4 | Open Weights | 128K+ | Self-hosting | Free (Weights) |
| Grok-3 | Real-time X Data | 128K | News & Trends | $16/mo (Premium+) |
| Herond AI | Web3 & Privacy | Browser-native | Crypto & Agents | Free / Token-gated |

Large Language Models and Agentic AI

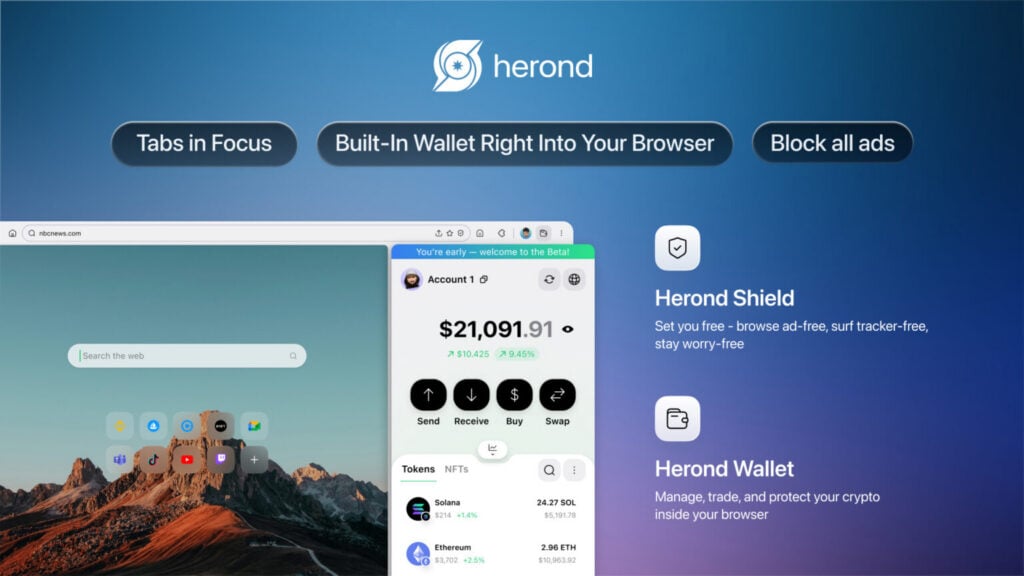

The role of LLMs in agentic browsers like Herond

- On-Chain Execution: Specifically for Web3, the LLM translates natural language into secure smart contract interactions.

- Privacy-First “Edge” AI: Agentic browsers increasingly run Small Language Models (SLMs) directly on your device. These compact models are optimized for local hardware, so browsing data never leaves your control.

Multi-agent systems and LLM orchestration

- Beyond the Search Bar: The “Agentic Web” is a world where websites are designed to be read by AI agents as much as humans.

- Universal Protocols: LLMs use new standards (like the Universal Commerce Protocol) to negotiate prices and complete transactions across different platforms automatically.

- Persistent Memory: 2026 agents use “Long-Term Memory” stacks (vector databases) to remember your preferences across months of interactions, making them true digital coworkers.
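Retrieval from a vector database boils down to one idea: store vectors, then return the stored items closest to the query vector. The sketch below replaces the neural embedding model with a toy bag-of-words vector. It also replaces a real database’s approximate nearest-neighbor index with a brute-force cosine-similarity search. `MemoryStore` and the stored preferences are hypothetical.

```python
import math
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding model: a sparse bag-of-words vector."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as Counters."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class MemoryStore:
    """Minimal in-memory stand-in for a vector database (brute-force search)."""

    def __init__(self):
        self.items = []  # (original text, vector) pairs

    def remember(self, text):
        self.items.append((text, embed(text)))

    def recall(self, query, k=1):
        query_vec = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(query_vec, item[1]),
                        reverse=True)
        return [text for text, _ in ranked[:k]]

memory = MemoryStore()
memory.remember("user prefers window seats on flights")
memory.remember("user likes thai food for team lunches")
print(memory.recall("book flights with window seats"))
```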

Why LLMs are the Cognitive Core of the Agentic Web

- Semantic Understanding: LLMs provide the “common sense” needed to navigate the messy, unorganized data of the internet to find exactly what is needed.

Large Language Models on Web3

On-chain AI and the role of LLMs in trustless systems

- On-Chain Logic: Platforms like Internet Computer (ICP) now host AI models directly inside smart contracts, allowing for “Fully On-Chain” autonomous decision-making.

- Proof of Intelligence: Staked assets (e.g., via EigenLayer) serve as slashable collateral. Nodes that report inaccurate or dishonest AI inference results risk losing their stake, which gives decentralized operators a strong economic incentive to be accurate.

LLM-powered smart contract analysis and security auditing

- Automated Auditing (SCALM/FORGE): LLMs use multi-layer reasoning (Syntax → Design Patterns → Architecture) to detect “bad practices” and vulnerabilities that traditional static tools miss.

- Real-Time Anomaly Detection: LLMs monitor transaction graphs and wallet clusters to flag money laundering or DeFi exploit patterns as they happen.

The convergence of LLMs and Web3 in agentic browsers

- Unified Chain Abstraction: Agentic browsers use LLMs to manage cross-chain operations, calculating the best gas fees and bridges across Ethereum, Solana, and L2s in a single step.

- Self-Sovereign Identity: Browsers use decentralized identifiers (DIDs) to verify the authenticity of AI-generated content, protecting users from “AI-native” phishing and deepfakes.
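Once the quotes are in hand, comparing cross-chain routes is ordinary arithmetic. The LLM’s job is to turn a request like “move my USDC to Solana” into this comparison. Below is a minimal sketch that assumes hypothetical routes, fees, and time estimates. A real agent would fetch live gas prices and bridge quotes.

```python
from dataclasses import dataclass

@dataclass
class Route:
    name: str
    gas_fee_usd: float
    bridge_fee_usd: float
    est_minutes: int

def best_route(routes, max_minutes):
    """Pick the cheapest route (gas + bridge fee) that meets a time limit."""
    eligible = [r for r in routes if r.est_minutes <= max_minutes]
    if not eligible:
        return None
    return min(eligible, key=lambda r: r.gas_fee_usd + r.bridge_fee_usd)

# Hypothetical quotes for illustration only.
routes = [
    Route("Ethereum -> Bridge A -> Solana", 4.10, 1.50, 15),
    Route("Arbitrum -> Bridge B -> Solana", 0.20, 2.00, 20),
    Route("Base -> Bridge C -> Solana", 0.15, 3.50, 5),
]
print(best_route(routes, max_minutes=30).name)
print(best_route(routes, max_minutes=10).name)
```

Tightening the time limit changes the answer. That trade-off is why an agent should ask the user, or infer, how urgent a transfer is.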

How Herond leverages LLMs for intelligent Web3 browsing

- Privacy-First Edge AI: By running optimized Small Language Models (SLMs) locally on your device, Herond ensures your sensitive wallet and browsing data never reaches a centralized server.

- Intelligent Portfolio Management: The LLM synthesizes real-time on-chain data (TVL, APR, gas trends) to suggest portfolio rebalancing or alert users to “alpha” opportunities without leaving the browser.
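One simple way to produce a rebalancing suggestion is to measure how far each holding has drifted from a target allocation. The sketch below uses a threshold rule with hypothetical holdings and targets. It ignores TVL, APR, and gas costs, which a real assistant would also weigh.

```python
def rebalance_suggestions(holdings_usd, target_weights, threshold=0.05):
    """Suggest trades that move a portfolio back toward target weights.

    Only assets whose weight drifts more than `threshold` get a suggestion.
    Positive amounts mean buy, negative amounts mean sell (in USD).
    """
    total = sum(holdings_usd.values())
    suggestions = {}
    for asset, target in target_weights.items():
        current_value = holdings_usd.get(asset, 0.0)
        drift = current_value / total - target
        if abs(drift) > threshold:
            suggestions[asset] = round(target * total - current_value, 2)
    return suggestions

# Hypothetical portfolio and targets.
holdings = {"ETH": 6000.0, "SOL": 1000.0, "USDC": 3000.0}
targets = {"ETH": 0.5, "SOL": 0.2, "USDC": 0.3}
print(rebalance_suggestions(holdings, targets))
```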

The Future of Large Language Models

Multimodal and omnimodal models – beyond text

- Beyond Vision & Audio: “Omnimodal” models are expected to process 3D spatial data, sensory input (haptic), and real-time 60fps video natively, rather than through separate plugins.

- Industry Specifics: Expect specialized omnimodality in healthcare (aligning imaging with genomic data) and robotics (aligning visual depth with motor control).

Smaller, faster, more efficient models – the efficiency revolution

- Small Language Models (SLMs): Models in the 3B–30B parameter range are expected to reach 2025-era “Frontier” performance, making high-end reasoning cheap and nearly instantaneous.

- Cost Deflation: Gartner predicts a 90% reduction in LLM inference costs by 2030, driven by specialized “Inference-on-Chip” hardware.

On-device LLMs – bringing AI intelligence to the edge

- The “Goldilocks Zone”: By 2027, smartphones and laptops are expected to run 7B–10B parameter models locally. This removes network round-trips and keeps personal data on the device.

- Offline Intelligence: AI assistants in cars, industrial tools, and wearables will maintain high-level reasoning capabilities even without a network connection.
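The arithmetic behind the 7B–10B “Goldilocks Zone” is straightforward: weight memory ≈ parameters × bits per weight ÷ 8 bytes. Quantizing to fewer bits per weight shrinks a 7B model from about 14 GB at 16-bit to about 3.5 GB at 4-bit. This sketch counts weights only. The KV cache and runtime overhead add more.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for model weights alone: params x bits / 8 bytes, in GB."""
    return num_params * bits_per_weight / 8 / 1e9

for bits in (16, 8, 4):
    print(f"7B model at {bits}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
```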

The role of LLMs in the agentic web and Web3 ecosystem

- From Search to Action: LLMs are becoming “Web Operating Systems.” Instead of searching for information, users tell an agent to “Manage my expenses” or “Book a multi-city trip,” and it executes the steps.

- On-Chain Verification: In the Web3 space, blockchain is used to verify “AI Provenance”, proving an agent is who it says it is and that its “thinking” hasn’t been tampered with.

- Agent Wallets: AI agents will have their own Web3 wallets to pay for API calls, data, or digital services autonomously using stablecoins.
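A hash chain is one way to make an agent’s action history tamper-evident. Each log entry stores the SHA-256 hash of its content plus the previous entry’s hash, so editing any past entry breaks every hash after it. Publishing the latest hash on a blockchain (not shown) would then anchor the whole history. This is an illustrative sketch with hypothetical actions, not a specific provenance protocol.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def entry_hash(action, prev_hash):
    payload = json.dumps({"action": action, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()

def append_entry(log, action):
    """Append an agent action, chaining it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"action": action, "prev": prev_hash,
                "hash": entry_hash(action, prev_hash)})

def verify(log):
    """Recompute every hash; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry_hash(entry["action"], prev_hash) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True

log = []
append_entry(log, "search flights NYC->LON")
append_entry(log, "pay 12 USDC for API call")
print(verify(log))  # True
log[0]["action"] = "pay 500 USDC"  # tamper with history
print(verify(log))  # False
```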

Predictions for LLM capabilities and adoption by 2030

- 100x Efficiency: Models will be 100 times more cost-efficient than 2022 versions, making intelligence a near-zero-cost commodity.

- The “Invisible” AI: By 2030, we won’t talk about “using an LLM.” AI reasoning will be embedded in every interface, from your kitchen appliances to your browser, operating silently in the background.

- Agentic Dominance: 65%+ of enterprise tasks will be handled by Multi-Agent Orchestrators rather than individual human prompts, with humans acting as “Reviewers” rather than “Doers.”

How to Choose the Right Large Language Model

Key criteria for evaluating LLMs – capability, cost, privacy, and safety

- Capability (Reasoning vs. Knowledge): Distinguish between a model’s ability to follow complex logic (Reasoning) and its internal database of facts (Knowledge).

- Cost Efficiency: Analyze the “Total Cost of Ownership,” including API input/output tokens, caching discounts, and the hardware required for self-hosting.

- Privacy & Data Residency: Ensure the model complies with local laws (GDPR, CCPA) and verify whether your data is used to train future model versions.

- Safety & Alignment: Evaluate the model’s safety training and filters (such as Anthropic’s “Constitutional AI”) for how well they reduce bias, hallucinations, and the generation of insecure code.
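The cost side of “Total Cost of Ownership” can be estimated with a few lines of arithmetic. The sketch below estimates a monthly API bill with a prompt-caching discount. It also computes the break-even point for buying hardware instead. Every price, volume, and discount in it is a hypothetical placeholder. Real self-hosting costs also include power, staff, and hardware refreshes.

```python
def monthly_api_cost(requests, input_tokens, output_tokens,
                     price_in_per_m, price_out_per_m,
                     cached_fraction=0.0, cache_discount=0.0):
    """Estimate monthly API spend in USD (prices are per million tokens).

    `cached_fraction` of each request's input tokens is billed at
    (1 - cache_discount) times the normal input price.
    """
    cached = input_tokens * cached_fraction
    uncached = input_tokens - cached
    input_cost = (uncached + cached * (1 - cache_discount)) * price_in_per_m / 1e6
    output_cost = output_tokens * price_out_per_m / 1e6
    return requests * (input_cost + output_cost)

def break_even_months(hardware_cost, self_host_monthly_opex, api_monthly_cost):
    """Months until buying hardware beats paying the API (None if it never does)."""
    monthly_savings = api_monthly_cost - self_host_monthly_opex
    if monthly_savings <= 0:
        return None
    return hardware_cost / monthly_savings

# Hypothetical traffic and prices, for illustration only.
api = monthly_api_cost(requests=100_000, input_tokens=2_000, output_tokens=500,
                       price_in_per_m=3.0, price_out_per_m=15.0,
                       cached_fraction=0.5, cache_discount=0.9)
print(f"API: ${api:,.0f}/month")
print(f"Break-even: {break_even_months(30_000, 500, api):.1f} months")
```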

Matching LLM capabilities to specific use cases

- Content Generation: Prioritize models like Claude for natural, “human” prose and creative storytelling.

- Technical Workflows: Use high-reasoning models (e.g., GPT-5.4 or o1) for complex debugging, architectural planning, and mathematical proofs.

- Agentic Actions: Select models with high “Function Calling” accuracy for autonomous browsing or Web3 transactions.
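With “function calling,” the model emits a structured request, usually JSON, that names a tool and its arguments. The application then decides whether to run it. The sketch below validates such a request before anything executes. The tool names, the argument schema, and the `{"name": ..., "arguments": ...}` shape are hypothetical, since each provider defines its own format.

```python
import json

# Hypothetical tool registry: tool name -> {argument name: JSON type}.
TOOLS = {
    "get_token_price": {"symbol": "string"},
    "send_transaction": {"to": "string", "amount": "number"},
}

TYPE_CHECKS = {
    "string": lambda v: isinstance(v, str),
    # bool is a subclass of int in Python, so exclude it explicitly.
    "number": lambda v: isinstance(v, (int, float)) and not isinstance(v, bool),
}

def parse_tool_call(model_output):
    """Validate a model's JSON tool call before the agent executes anything."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")
    name, args = call.get("name"), call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    for arg, json_type in TOOLS[name].items():
        if arg not in args or not TYPE_CHECKS[json_type](args[arg]):
            raise ValueError(f"{name}: argument {arg!r} must be a {json_type}")
    return name, args

print(parse_tool_call('{"name": "get_token_price", "arguments": {"symbol": "ETH"}}'))
```

Rejecting a malformed call outright is the conservative choice for actions that move funds. An agent can feed the error message back to the model and ask it to try again.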

API access vs. self-hosted models

API (Cloud):

- Pros: Zero maintenance, instant access to “Frontier” intelligence, and scalable infrastructure.

- Cons: Variable latency, data privacy concerns, and potential “model drift” as providers update versions.

Self-Hosted (Local):

- Pros: 100% data sovereignty, offline capability, and no per-token costs after hardware investment.

- Cons: Running large models requires high-end GPUs (e.g., NVIDIA H100s), technical expertise, and slower updates compared to cloud giants.

Enterprise vs. Consumer LLM options in 2026

- Enterprise: Focuses on SLA guarantees, SOC2 compliance, “Admin Consoles” for team management, and “Private Clouds” where models never see public data.

- Consumer: Prioritizes multimodality (voice/vision), ease of use, and integration with personal apps like Spotify, Google Calendar, or Web3 wallets.

- The Middle Ground: “Team” tiers provide a balance of advanced intelligence with shared workspace collaboration at a per-seat price.

How to stay current as the LLM landscape evolves

- Monitor Leaderboards: Track LMArena (formerly LMSYS Chatbot Arena) for “vibe-based” human rankings and Hugging Face for technical open-source benchmarks.

- Adopt “Model-Agnostic” Tooling: Use frameworks like LangChain or Vercel AI SDK so you can swap your underlying LLM in minutes as better models emerge.

- Follow “Inference” News: Watch for breakthroughs in quantization (running big models on small chips) to understand when frontier-level power hits your mobile device.
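Frameworks such as LangChain and the Vercel AI SDK provide this abstraction ready-made. The underlying pattern is to code against a small interface and plug any provider in behind it. The sketch below shows that pattern with a Python `Protocol`. It does not use either library’s API, and both backends are hypothetical stubs.

```python
from typing import Protocol

class ChatModel(Protocol):
    """The only thing application code is allowed to depend on."""
    def complete(self, prompt: str) -> str: ...

class EchoModel:
    """Hypothetical stand-in for a cloud provider's client."""
    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"

class UppercaseModel:
    """Hypothetical stand-in for a locally hosted model."""
    def complete(self, prompt: str) -> str:
        return prompt.upper()

def summarize(model: ChatModel, text: str) -> str:
    # Swapping providers means passing a different object; this code never changes.
    return model.complete(f"Summarize: {text}")

for backend in (EchoModel(), UppercaseModel()):
    print(summarize(backend, "LLMs in 2026"))
```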

Conclusion

The journey from the first GPT models to the sophisticated reasoning of Claude and the browser-native power of Herond AI marks a fundamental shift in how we work. In 2026, Large Language Models are no longer just “chatbots”; they are the cognitive engines of an agentic web, capable of navigating complex tasks, managing Web3 assets, and protecting user privacy at the edge.

About Herond

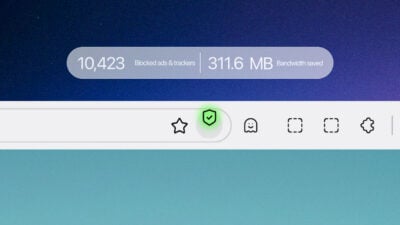

Herond Browser is a cutting-edge Web 3.0 browser designed to prioritize user privacy and security. By blocking intrusive ads, harmful trackers, and profiling cookies, Herond creates a safer and faster browsing experience while minimizing data consumption.

To enhance user control over their digital presence, Herond offers two essential tools:

- Herond Shield: A robust adblocker and privacy protection suite.

- Herond Wallet: A secure, multi-chain, non-custodial social wallet.

As a pioneering Web 2.5 solution, Herond is paving the way for mass Web 3.0 adoption by providing a seamless transition for users while upholding the core principles of decentralization and user ownership.

Have any questions or suggestions? Contact us:

- On Telegram https://t.me/herond_browser

- DM our official X @HerondBrowser

- Technical support topic on https://community.herond.org